From Prompts to Proof: Agentic, Auditable Enterprise AI

Across regulated industries, a consistent pattern is emerging. AI initiatives do not fail because the technology falls short. They stall because organizations cannot explain what happened, why it happened, or who is accountable when it matters most.

Enterprise adoption of generative AI has moved well beyond experimentation. Most organizations understand what AI can do. The harder question is whether they can stand behind its behavior once it is deployed inside real operating environments.

In enterprise AI, impressive output is not enough. Evidence is the currency of trust.

The Enterprise AI Trust Gap

Early excitement around generative AI focused on speed, fluency, and surface-level capability. Those attributes remain useful, but they are insufficient for enterprise deployment, especially in regulated environments.

As AI systems move beyond isolated use and begin influencing decisions or triggering actions, a different set of questions emerges:

- What data was accessed?

- What rules or policies were applied?

- What actions were taken?

- What changed as a result?

- Can these decisions be reconstructed and defended months later?

When organizations cannot answer these questions, AI initiatives slow or stop entirely. The issue is rarely model performance. It is accountability.

This gap between capability and explainability is where most enterprise AI efforts quietly fail. It is also where platforms such as ThinkTrends Agentic AI are designed to operate differently, with traceability and governance treated as core system requirements rather than afterthoughts.
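To make the questions listed in this section concrete, each one can be treated as a field in a structured audit record. The following is a minimal sketch, not a description of any particular product: the `AuditRecord` class and all of its field names are hypothetical, chosen only to mirror those questions.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AuditRecord:
    """One agent action, captured so it can be reconstructed later.

    Hypothetical schema: field names are illustrative assumptions.
    """
    actor: str                          # who or what acted (agent or user id)
    data_accessed: tuple[str, ...]      # what data was accessed
    policies_applied: tuple[str, ...]   # what rules or policies were applied
    action: str                         # what action was taken
    changes: dict                       # what changed as a result
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys give a stable serialization for storage and later review.
        return json.dumps(asdict(self), sort_keys=True)


# Example values are invented for illustration only.
record = AuditRecord(
    actor="claims-agent-01",
    data_accessed=("crm.customers",),
    policies_applied=("pii-masking-v2",),
    action="update_claim_status",
    changes={"claim_status": ["pending", "approved"]},
)
print(record.to_json())
```

Capturing these fields at the moment of action, rather than reconstructing them afterwards, is what makes it possible to answer the last question in the list months later.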

Why Prompts Do Not Scale to Enterprise Systems

Prompt-based tools have value. They are effective for exploration, ideation, and bounded assistance. But prompt-to-response interactions were never designed to function as enterprise systems of record or systems of action.

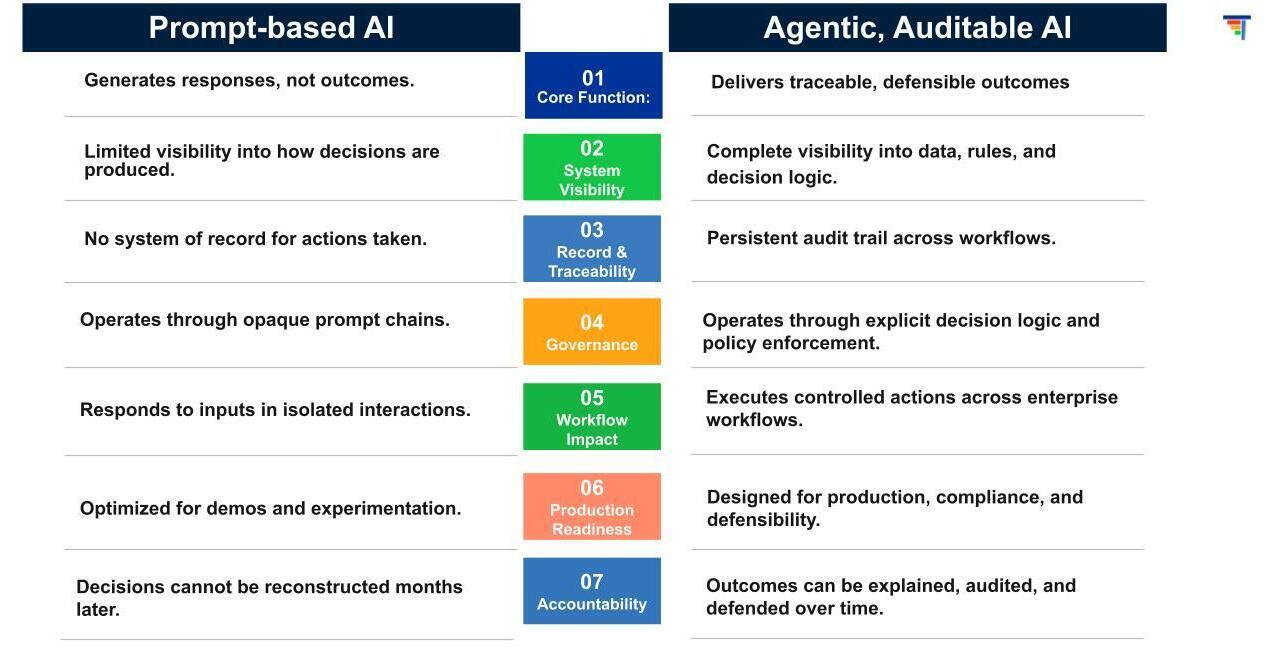

A prompt generates a response. An enterprise system must generate traceable outcomes.

Responding to a prompt is a contained interaction. Acting across workflows, systems, and data sources introduces operational, regulatory, and compliance consequences. As AI begins to operate beyond individual users, risk compounds quickly.

This distinction is critical and often misunderstood.

Many offerings positioned as "agentic" still rely on opaque prompt chains with limited visibility into decision logic, data access, or downstream impact. They may perform well in demonstrations, but they lack the controls required for enterprise-scale operation.

Prompt-driven tools can assist. They are not designed to govern. Systems such as ThinkTrends Agentic AI are purpose-built to move beyond prompt dependency by embedding decision logic, policy enforcement, and auditability directly into how AI operates across workflows.

Agentic AI Requires Governance, Not Just Autonomy

True agentic AI is not defined by autonomy alone. It is defined by controlled autonomy.

Agentic systems are engineered to operate across workflows while remaining bound by explicit rules, policies, and oversight. They are designed to coordinate actions, handle complexity, and operate consistently across systems without sacrificing traceability.

Without governance, autonomy becomes exposure.

As AI systems begin to act rather than merely respond, governance, observability, and auditability move from optional considerations to foundational requirements. Enterprises must be able to see how decisions were made, what data and rules were involved, and how outcomes were produced.

This principle underpins how ThinkTrends Agentic AI approaches enterprise deployment: autonomy is allowed only where visibility, control, and accountability are already in place.

This is not about replacing human judgment. It is about ensuring that systems operating with a degree of autonomy can be trusted, monitored, and defended when decisions affect compliance, safety, or business outcomes.

Auditability Is the Line Between Experimentation and Production

In regulated environments, AI is increasingly evaluated the same way as other enterprise systems: not by how impressive it appears, but by whether its behavior can be explained, audited, and defended.

Audit logs are not a feature to add later. They are the gatekeeper between experimentation and production.

If an AI system cannot produce a verifiable audit trail, demonstrate how decisions were made, or support investigation and rollback, it will not survive procurement, security, or regulatory review. This is why many AI assistants succeed in pilots but never reach deployment.
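One common way to make an audit trail verifiable is hash chaining, where each entry stores a hash that covers the previous entry's hash, so a later edit to any entry is detectable. The sketch below illustrates that general technique under stated assumptions: the `AuditTrail` class and its methods are hypothetical, the log lives only in memory, and a real deployment would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    # Hash the previous hash together with the payload, using a stable encoding.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only, hash-chained log (illustrative, in-memory only)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, payload: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "prev": prev_hash,
            "payload": payload,
            "hash": _entry_hash(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash in order; any edited entry breaks the chain.
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True
```

A reviewer can call `verify()` at any time. If a stored payload has been altered after the fact, the recomputed hash no longer matches and verification fails, which is the property an investigation depends on.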

Production-ready enterprise AI systems, such as those built on ThinkTrends Agentic AI principles, are designed with:

- Explicit ownership of outcomes

- Role-based access and policy enforcement

- Continuous observability across workflows

- Persistent audit trails that hold up over time

These capabilities cannot be bolted on after deployment. They must be embedded from the start.
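As a rough illustration of what "embedded from the start" can look like, the sketch below routes every action through a single gate that checks role-based permissions and writes an audit entry before anything runs. Everything here is an assumption made for the example: the `ROLE_PERMISSIONS` mapping, the `GovernedExecutor` class, and the role and action names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"read_report"},
    "claims_agent": {"read_report", "update_claim"},
}


@dataclass
class GovernedExecutor:
    """Runs actions only after a policy check, logging every attempt."""
    audit_log: list = field(default_factory=list)

    def execute(self, actor: str, role: str, action: str,
                handler: Callable[[], object]) -> object:
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        entry = {"actor": actor, "role": role, "action": action, "allowed": allowed}
        # Log the decision before acting so denied attempts are visible too.
        self.audit_log.append(entry)
        if not allowed:
            raise PermissionError(f"role {role!r} may not perform {action!r}")
        result = handler()
        entry["result"] = result  # record the outcome alongside the decision
        return result
```

Because the handler can only be reached through `execute`, there is no code path that acts without first being checked and recorded. That is the difference between governance that is built in and governance that is added later.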

What "Production-Ready" AI Actually Means

A successful demo does not imply readiness for deployment. The real challenge lies in integrating AI into complex operating environments without introducing unacceptable risk.

Production-ready AI is defined not by model performance, but by whether the system can function as part of the enterprise environment itself. That includes handling divergent data schemas, navigating workflow complexity, enforcing policy consistently, and maintaining end-to-end traceability.

Demos optimize for speed and isolated capability. Production demands accountability.

As AI systems mature, legal, compliance, security, and risk teams ask practical, unavoidable questions:

- Who owns this outcome?

- What data was accessed across systems?

- What controls were applied?

- Can this decision be audited months later?

Agentic, auditable architectures such as those underpinning ThinkTrends Agentic AI are designed to answer these questions by default, rather than retroactively.

From Output to Proof

The future of enterprise AI is not defined by conversation alone. It is defined by systems that can act responsibly, operate transparently, and prove what they did over time.

In regulated environments, readiness is not a milestone reached after success. It is the condition for moving forward at all.

Organizations that recognize this shift early will be able to deploy AI with confidence rather than caution. Those that do not will continue to see initiatives stall at the boundary between experimentation and trust.

In the end, enterprise AI is not judged by what it can say. It is judged by what it can prove.